Attention—our ability to select the most important information while filtering out distractions—is essential for daily behavior. We study the mechanisms of attention in both children and adults, including those with neurodevelopmental conditions such as autism.

Our research addresses questions such as:

- What constitutes individual differences in attention mechanisms?

- How are these differences shaped across development from childhood to adulthood?

- How do these differences arise in conditions like autism spectrum disorder?

To address these questions, we use a combination of psychophysics, eye tracking, and neuroimaging methods.

Neural mechanisms of selective attention across development

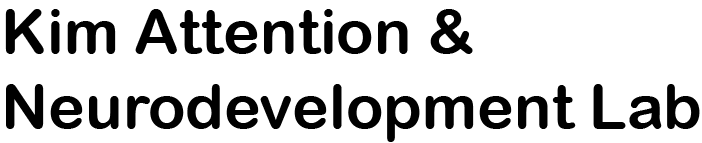

While the neural mechanisms underlying selective attention in adults have been widely studied, their developmental trajectory during childhood remains less understood. To explore how selective attention develops in childhood, we apply biased competition theory, a theoretical framework grounded in neuroimaging and electrophysiological studies.

In this line of work, we ask:

- How does the continued development of visual functions shape selective attention in school-aged children?

- How does atypical development of visual functions influence attention differences in neurodevelopmental disorders?

- How does the development of attention interact with other cognitive skills that are rapidly changing during childhood?

Relevant papers:

- Kim, N. Y., & Kastner, S. (2019). A biased competition theory for the developmental cognitive neuroscience of visuo-spatial attention. Current Opinion in Psychology, 29, 219-228.

- Hoyos, P. M., Kim, N. Y., Cheng, D., Finkelston, A., & Kastner, S. (2021). Development of spatial biases in school‐aged children. Developmental Science, 24(3), e13053.

- Kim, N. Y., Pinsk, M. A., & Kastner, S. (2021). Neural Basis of Biased Competition in Development: Sensory Competition in Visual Cortex of School-Aged Children. Cerebral Cortex, 31(6), 3107-3121.

Characterizing attention in autism with dynamic stimuli and accessible technology

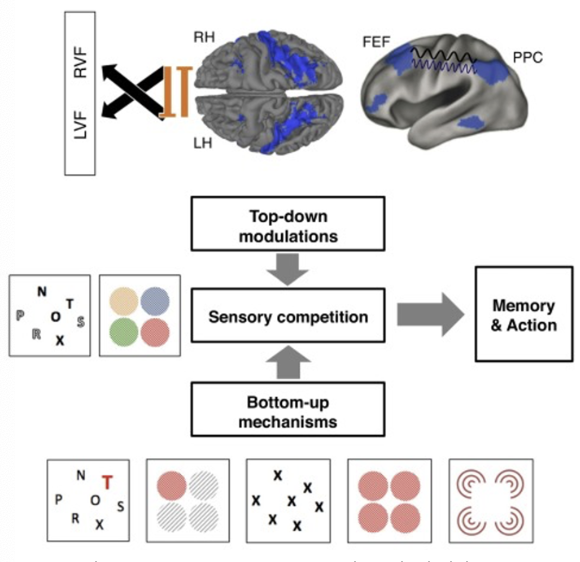

We are also interested in studying attention patterns, based on eye movements, in more dynamic, rich, and naturalistic contexts. For example, we examine which features capture each person’s attention while they freely explore images (e.g., photographs of people, natural scenes, or works of art) or videos (e.g., movies, TV shows).

Importantly, we use innovative camera-based eye-tracking methods to overcome the limitations of conventional eye-tracking technology in terms of accessibility and ease of use. These methods can be implemented on smartphones, tablets, and personal computers (with webcams). With these approaches, we collect samples that are both broad and dense: we recruit large and diverse groups of individuals and administer multiple sessions over time.

We are particularly interested in combining these naturalistic paradigms with scalable technology to study differential attention patterns in autism. Atypical attention, especially in social contexts, is one of the most widely observed behavioral characteristics of autism, with important implications for clinical screening and diagnosis. Our approach provides new insight into variability in attention across people and across time, informing the development of personalized tools for screening, diagnosis, and intervention.

In this line of work, we ask:

- What aspects or features of stimuli capture attention in each individual, and how do these differ in autistic people?

- How are attention differences in autism associated with comprehension of social dynamics?

- How stable are these attention differences over time? If greater variability is observed in a subset of individuals, what is the source of such variation? How might this variability inform clinical phenotypes?

Relevant papers:

- Kim, N. Y., He, J., Wu, Q., Dai, N., Kohlhoff, K., Turner, J., … & Navalpakkam, V. (2024). Smartphone‐based gaze estimation for in‐home autism research. Autism Research, 17(6), 1140-1148.

- Wu, Q., Kim, N. Y., Lai, A. K., Paul, L. K., & Adolphs, R. (2025). Feature-based modeling of visual attention in autism: A large-scale online eye-tracking study. Conference on Cognitive Computational Neuroscience.

- Wu, Q., Kim, N. Y., & Adolphs, R. (under revision). Model-based eye-tracking: a new window to understand individual differences and psychiatric disorders. https://doi.org/10.31234/osf.io/mg9n7_v1

Neural basis of social perception: Insights from neuroimaging and computational models

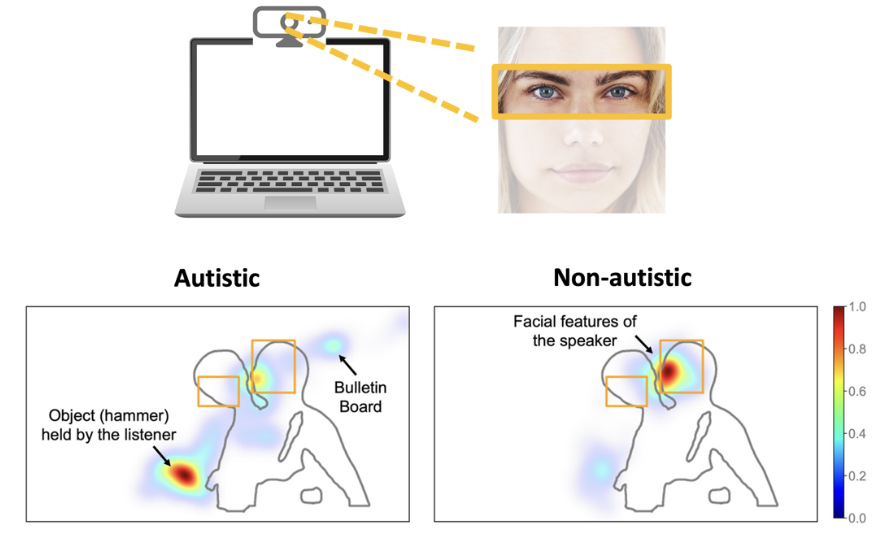

The human visual system, particularly in the inferior temporal cortex, represents category-level object information, including socially significant categories like faces and body parts. How we perceive information in these visual categories is crucial for social interactions. In this line of research, we study how social visual information, particularly from human faces and body gestures, is represented in the brain.

Relevant papers:

- Kim, N. Y., Lee, S. M., Erlendsdottir, M., & McCarthy, G. (2014). Discriminable spatial patterns of activation for faces and bodies in the fusiform gyrus. Frontiers in Human Neuroscience, 8, 1–12.

- Kim, N. Y., & McCarthy, G. (2016). Task influences pattern discriminability for faces and bodies in ventral occipitotemporal cortex. Social Neuroscience, 1-10.

- Engell, A. D., Kim, N. Y., & McCarthy, G. (2018). Sensitivity to faces with typical and atypical part configurations within regions of the face-processing network: An fMRI study. Journal of Cognitive Neuroscience, 30(7), 963-972.

- Mukherjee, K., Kim, N. Y., Alamooti, S. T., Adolphs, R., & Kar, K. (2023). Leveraging artificial neural networks to enhance diagnostic efficiency in autism spectrum disorder: A study on facial emotion recognition. Conference on Cognitive Computational Neuroscience.